Assignment 4

Probabilistic Models of Human and Machine Intelligence

CSCI 5822

Assigned Thu Feb 22

Due Thu Mar 1

Goal

This assignment will give you practice in determining conditional independence and performing exact inference.

PART I

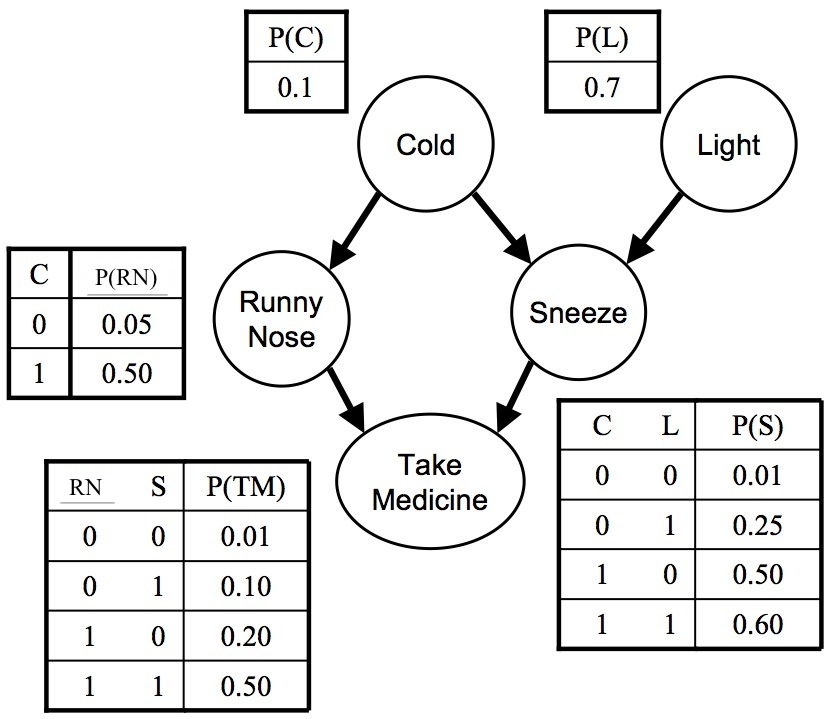

Consider the Bayes net with 5 binary random variables. (Thank you to Pedro Domingos of U. Washington for the figure.)

1. What is the Markov blanket of Sneeze?

2. What is the Markov blanket of Take Medicine?

3. Write the expression for the joint probability, P(L, C, RN, S, TM), in terms of the conditional probability distributions.

4. Using your formula in part 3, compute P(C=1 | TM=1, RN=0, L=0). Remember the definition of conditional probability: P(X | Y) = P(X, Y) / P(Y). Y can refer to multiple random variables.

5. Draw the equivalent Markov net by moralizing the graph.

6. Draw the equivalent factor graph.

7. Write the joint probability function for the Markov net, with one term per maximal clique. Show the correspondence between the potential functions in this equation with the conditional probability distributions in question 3.

8. Is the Bayes net above a polytree? If not, what links might you add or remove to make it into a polytree?

9. Which of the following are true:

(a) C ⊥ TM | RN, S

(b) TM ⊥ C | S

(c) C ⊥ L

(d) C ⊥ L | TM

(e) RN ⊥ L | TM

(f) RN ⊥ L

(g) RN ⊥ L | S

(h) RN ⊥ L | C, S

As explained in class, the expression "X ⊥ Y | Z" means X and Y are independent when conditioned on Z.
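
For checking hand-derived answers, the definition P(X | Y) = P(X, Y) / P(Y) and a conditional independence statement can both be tested numerically by enumerating a joint distribution. The sketch below is only an illustration: it uses a hypothetical three-variable chain A -> B -> C with made-up CPT values, not the network in the figure, and the helper names (`joint`, `prob`, `conditional`, `independent`) are assumptions introduced here.

```python
from itertools import product

# Hypothetical CPTs for a toy chain A -> B -> C (all binary).
# The numbers are illustrative assumptions, NOT the assignment's figure.
P_A = {1: 0.3, 0: 0.7}
P_B_given_A = {1: {1: 0.9, 0: 0.1}, 0: {1: 0.2, 0: 0.8}}  # P_B_given_A[a][b]
P_C_given_B = {1: {1: 0.6, 0: 0.4}, 0: {1: 0.1, 0: 0.9}}  # P_C_given_B[b][c]


def joint(a, b, c):
    """Joint probability as the product of the CPTs along the chain."""
    return P_A[a] * P_B_given_A[a][b] * P_C_given_B[b][c]


def prob(**fixed):
    """Probability of a partial assignment, summing the joint over the rest."""
    total = 0.0
    for a, b, c in product((0, 1), repeat=3):
        assignment = {"a": a, "b": b, "c": c}
        if all(assignment[name] == value for name, value in fixed.items()):
            total += joint(a, b, c)
    return total


def conditional(query, evidence):
    """P(query | evidence) = P(query, evidence) / P(evidence)."""
    return prob(**query, **evidence) / prob(**evidence)


def independent(x, y, given=(), tol=1e-9):
    """Test whether x and y are independent given the variables in `given`.

    Compares P(x, y | z) with P(x | z) * P(y | z) for every value combination.
    """
    for z_values in product((0, 1), repeat=len(given)):
        z = dict(zip(given, z_values))
        for xv, yv in product((0, 1), repeat=2):
            p_xy = conditional({x: xv, y: yv}, z)
            p_x = conditional({x: xv}, z)
            p_y = conditional({y: yv}, z)
            if abs(p_xy - p_x * p_y) > tol:
                return False
    return True


if __name__ == "__main__":
    print("P(A=1 | C=1) =", conditional({"a": 1}, {"c": 1}))
    print("A indep. of C given B:", independent("a", "c", given=("b",)))
    print("A indep. of C (marginally):", independent("a", "c"))
```

A numerical check like this can only confirm or refute independence for one particular set of CPT values; the questions above ask about independence implied by the graph structure, which must hold for every choice of CPTs.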

PART II

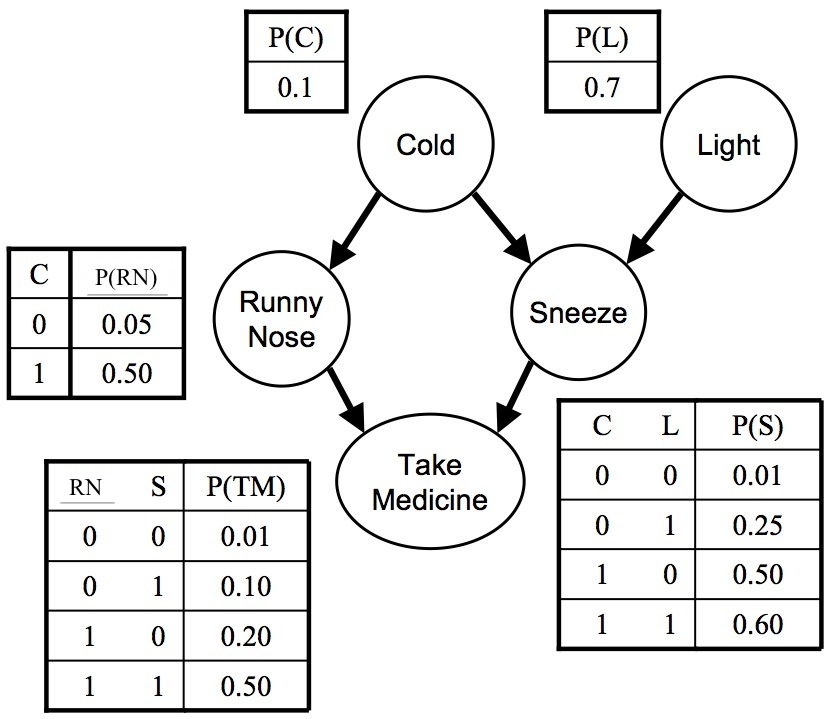

1. Write the joint distribution over A, D, U, H, and P in this graphical model (from Barber, Fig 3.14). Ignore the shading of H and A.

2. Write out an expression for P(H) as a summation over nuisance variables in a manner that would be appropriate for efficient variable elimination. (Don't do any numerical computation; simply write the expression in terms of the conditional probabilities and summations over the nuisance variables. Arrange the summations as we did in the variable elimination examples to be efficient in computing partial results.)

3. Write out a simplified expression for P(U=u | D=d) in a manner that would be appropriate for variable elimination. By 'simplified expression', I mean to reduce the expression to the simplest possible form, eliminating constants and unnecessary terms.
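
As a reminder of what "arranging the summations" buys, the sketch below compares brute-force marginalization with sums pushed inward on a hypothetical four-variable chain X1 -> X2 -> X3 -> X4. The chain, its CPT values, and the function names are assumptions made for illustration; they are not the model in the figure above, and the elimination order shown is specific to this toy chain.

```python
from itertools import product

# Hypothetical CPTs for a toy chain X1 -> X2 -> X3 -> X4 (all binary).
# Values are illustrative assumptions, NOT taken from the assignment.
p1 = [0.6, 0.4]                  # p1[x1]      = P(x1)
p2 = [[0.7, 0.3], [0.2, 0.8]]    # p2[x1][x2]  = P(x2 | x1)
p3 = [[0.9, 0.1], [0.5, 0.5]]    # p3[x2][x3]  = P(x3 | x2)
p4 = [[0.3, 0.7], [0.6, 0.4]]    # p4[x3][x4]  = P(x4 | x3)

VALUES = (0, 1)


def marginal_brute_force(x4):
    """P(x4) by summing the full joint over every (x1, x2, x3) combination."""
    return sum(
        p1[a] * p2[a][b] * p3[b][c] * p4[c][x4]
        for a, b, c in product(VALUES, repeat=3)
    )


def marginal_eliminated(x4):
    """P(x4) with sums pushed inward:

    P(x4) = sum_x3 P(x4|x3) * sum_x2 P(x3|x2) * sum_x1 P(x2|x1) P(x1)
    """
    # Eliminate x1: intermediate table over x2.
    m2 = [sum(p1[a] * p2[a][b] for a in VALUES) for b in VALUES]
    # Eliminate x2: intermediate table over x3.
    m3 = [sum(m2[b] * p3[b][c] for b in VALUES) for c in VALUES]
    # Eliminate x3: the result depends only on x4.
    return sum(m3[c] * p4[c][x4] for c in VALUES)


if __name__ == "__main__":
    for x4 in VALUES:
        print(x4, marginal_brute_force(x4), marginal_eliminated(x4))
```

Each intermediate table (`m2`, `m3`) is computed once and reused, so the work grows linearly with chain length rather than exponentially, which is the property the variable elimination questions are asking you to exploit on paper.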

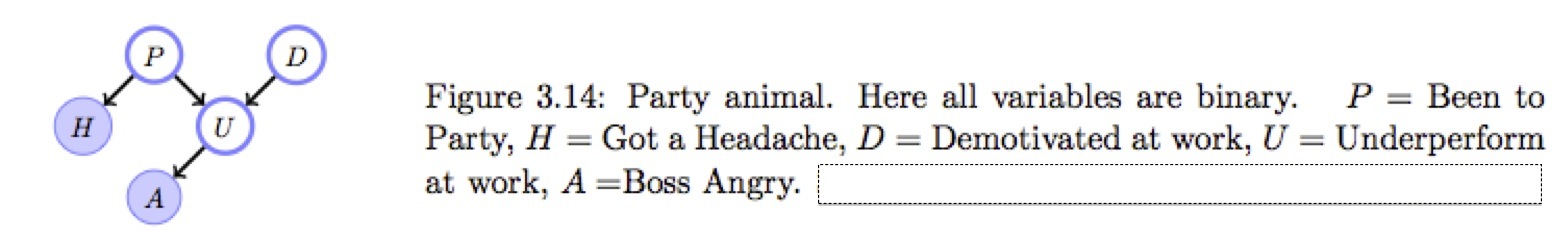

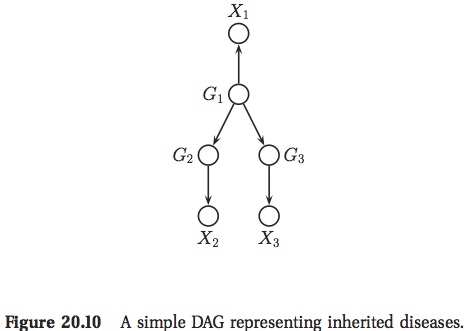

PART III

The Bayes net below comes from Kevin Murphy's text (Exercise 20.3).

1. Compute P(G1 = 2 | X2 = 50)

2. Compute P(X3 = 50 | X2 = 50)
